
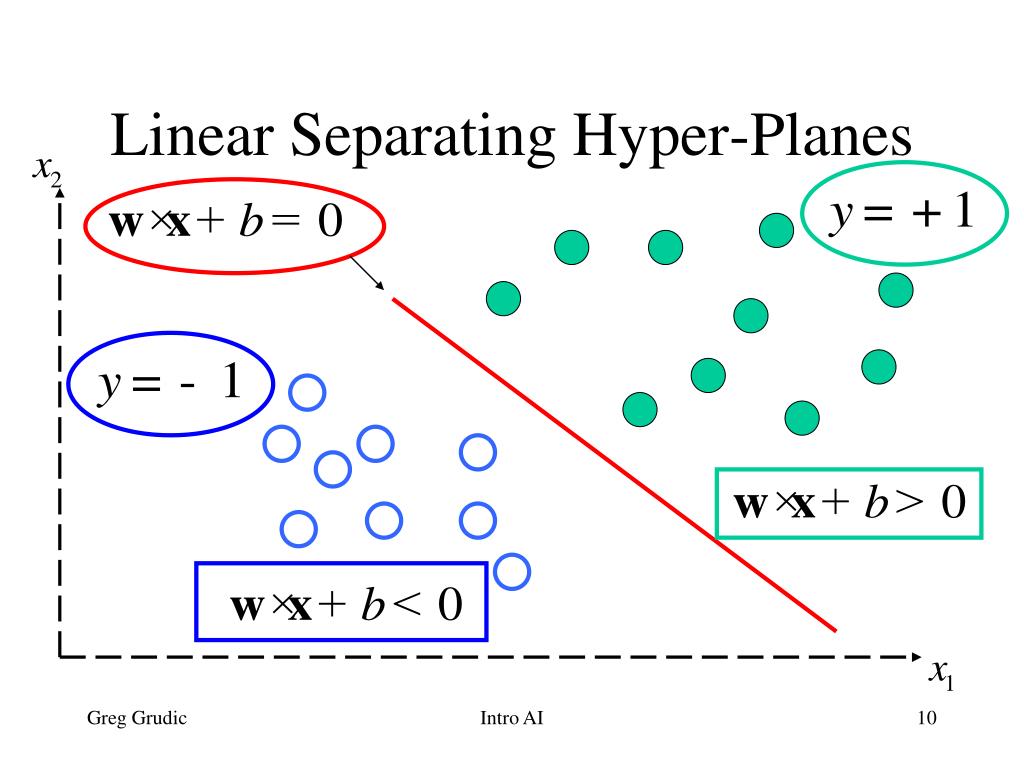

Every point on the line $l$ can be written as $p + t\,w$ for some $t \in \mathbb{R}$. To find the distance from $p$ to the hyperplane $H$, we draw the line $l$ from $p$ in the direction of $w$ and compute the distance between $p$ and $q = l \cap H$. Geometrically, $w$ is a normal vector to the hyperplane $H$. The equation for the plane determined by $N$ and $Q$ is $A(x - x_0) + B(y - y_0) + C(z - z_0) = 0$, which we could write as $Ax + By + Cz + D = 0$, where $D = -Ax_0 - By_0 - Cz_0$.

The value reported by libsvm is rather the output of the decision function, a sum over the support vectors.
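The construction above (walk from $p$ along the normal $w$ until the line meets $H$) can be sketched in a few lines of Python. This is a minimal illustration, assuming the hyperplane is given as $w \cdot x + b = 0$; the function names `project_onto_hyperplane` and `distance_to_hyperplane` are hypothetical and chosen only for this example.

```python
import math

def project_onto_hyperplane(w, b, p):
    """Return q, the point where the line p + t*w meets the hyperplane w.x + b = 0."""
    w_dot_p = sum(wi * pi for wi, pi in zip(w, p))
    w_dot_w = sum(wi * wi for wi in w)
    # Solve w.(p + t*w) + b = 0 for t.
    t = -(w_dot_p + b) / w_dot_w
    return [pi + t * wi for wi, pi in zip(w, p)]

def distance_to_hyperplane(w, b, p):
    """Distance from p to the hyperplane, measured as the length of the segment from p to q."""
    q = project_onto_hyperplane(w, b, p)
    return math.sqrt(sum((pi - qi) ** 2 for pi, qi in zip(p, q)))

# Illustrative values (not from the text): the line 3*x1 + 4*x2 - 5 = 0 and the origin.
print(distance_to_hyperplane([3.0, 4.0], -5.0, [0.0, 0.0]))
```

The result agrees with the closed form $|w \cdot p + b| / \|w\|$, which is what the segment length from $p$ to $q$ simplifies to.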

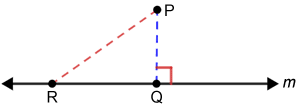

The $\alpha$ value that you get from libsvm has nothing to do with the $\alpha$ in the decision function.

To answer your question more specifically: the idea in SVMs is indeed that the further a test vector is from the hyperplane, the more it belongs to a certain class (except when it is on the wrong side, of course). In that sense, support vectors do not belong to the class with high probability, because they are either the ones closest to the hyperplane or on the wrong side of it.

The most notable one, which is, I think, also implemented in libsvm, is: John Platt, "Probabilistic outputs for Support Vector Machines and Comparison to Regularized Likelihood Methods" (NIPS 1999).

You get to the Gaussian likelihood from the loss function by flipping its sign and exponentiating it. However, if you do that with the loss function of the SVM, the log-likelihood is not a normalizable probabilistic model.

In the following instance, hyperplane C is the optimal one, since it maximizes the sum of the distances to the nearest data points on the positive side and on the negative side. We can describe the points lying on this hyperplane using the equation $w \cdot x + b = 0$, where $w = (w_1, w_2, \ldots, w_n)$ is normal (i.e., perpendicular) to the hyperplane. Use $\cdot$ to denote the dot product of two vectors, e.g. $v \cdot w$ for the vectors $v$ and $w$.

Here's a quick sketch of how to calculate the distance from a point $P = (x_1, y_1, z_1)$ to a plane determined by normal vector $N = (A, B, C)$ and point $Q = (x_0, y_0, z_0)$.

Let $P_1$ be the hyperplane consisting of the set of points $x = (x_1, x_2)$ for which $3x_1 + x_2 - 1 = 0$. (Note that this hyperplane is in fact a line, since it is 1-dimensional.)

Find the (perpendicular) distance between the point $(3, 2, 0)$ and the plane through the origin spanned by $v = (2, 4, 0)$ and $w = (0, 1, -1)$.
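The exercise with the point $(3, 2, 0)$ and the plane spanned by $v$ and $w$ can be worked through numerically. The sketch below assumes the standard approach of taking the cross product $v \times w$ as the plane's normal and then dividing $|n \cdot p|$ by $\|n\|$; the helper names `cross`, `dot`, and `distance_to_plane_through_origin` are hypothetical.

```python
import math

def cross(a, b):
    """Cross product of two 3-vectors; the result is normal to the plane they span."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dot(a, b):
    """Dot product of two vectors of equal length."""
    return sum(ai * bi for ai, bi in zip(a, b))

def distance_to_plane_through_origin(p, v, w):
    """Perpendicular distance from p to the plane through the origin spanned by v and w."""
    n = cross(v, w)  # normal vector; the plane is n.x = 0
    return abs(dot(n, p)) / math.sqrt(dot(n, n))

# v x w = (-4, 2, 2), n.p = -8, |n| = sqrt(24), so the distance is 8 / sqrt(24).
print(distance_to_plane_through_origin((3, 2, 0), (2, 4, 0), (0, 1, -1)))  # approximately 1.633
```

Because the plane passes through the origin, the offset term is zero and only the normal is needed.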
Minimizing the regularized least-squares objective is equivalent to maximizing the log-posterior of $w$ given the data, $p(w \mid (y_1,x_1),\ldots,(y_m,x_m)) \propto \frac{1}{Z} \exp(-\|w\|_2^2)\prod_i \exp(-\|y_i - \langle w, x_i\rangle - b\|_2^2)$, which you can see to be the product of a Gaussian likelihood and a Gaussian prior on $w$ ($Z$ makes sure that it normalizes).

The weight vector is obtained by minimizing the sum of the two.

For example, in regularized least squares you have the loss function $\sum_i \|y_i - \langle w, x_i\rangle - b\|_2^2$ and the regularizer $\|w\|_2^2$. One reason is that the SVM loss does not correspond to a normalizable likelihood. Let me first answer your question in general.
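To make "minimizing the sum of the two" concrete, here is a minimal sketch for the one-dimensional case, where the minimizer has a simple closed form. The weighting parameter `lam` on the regularizer is an assumption added for illustration (the text uses an unweighted regularizer, i.e. `lam = 1`), and the function names are hypothetical.

```python
def objective(w, b, xs, ys, lam=1.0):
    """Squared-error loss plus lam times the squared-norm regularizer, for scalar inputs."""
    loss = sum((y - w * x - b) ** 2 for x, y in zip(xs, ys))
    return loss + lam * w * w

def fit_regularized_least_squares(xs, ys, lam=1.0):
    """Closed-form minimizer of objective() for scalar inputs; the bias b is not regularized."""
    m = len(xs)
    x_mean = sum(xs) / m
    y_mean = sum(ys) / m
    s_xx = sum((x - x_mean) ** 2 for x in xs)
    s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    w = s_xy / (s_xx + lam)   # the regularizer shrinks w toward zero
    b = y_mean - w * x_mean   # optimal bias for that w
    return w, b

# Illustrative data lying exactly on y = 2x + 1.
xs, ys = [0.0, 1.0, 2.0, 3.0], [1.0, 3.0, 5.0, 7.0]
w, b = fit_regularized_least_squares(xs, ys, lam=1.0)
print(w, b)
```

With `lam=0` the fit recovers the unregularized line; with `lam=1` the slope is pulled below 2, which is the effect of the Gaussian prior on $w$ in the posterior view.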
